Contents

- Why Velocity Profile Decides the Required Straight Run

- Straight Length by Flow Meter Type

- ISO 5167 Lengths for Differential Pressure Elements

- Upstream Disturbance Multipliers

- Flow Conditioners as a Compensation Strategy

- Measuring Straight Length the Right Way

- Common Mistakes That Wreck Accuracy

- Short-Run Flow Meter Alternatives

- FAQ

Why Velocity Profile Decides the Required Straight Run

Every flow meter that infers volumetric flow from a velocity measurement assumes a fully developed turbulent velocity profile: symmetric about the pipe axis, fairly flat across the core, and peaking at the centerline. (The parabolic profile belongs to laminar flow, not turbulent.) Anything that disturbs that profile, such as an elbow, valve, reducer, or pump, introduces swirl and asymmetry that can bias the reading by several percent. The fix is straight pipe: enough length downstream of the disturbance for friction at the wall to re-symmetrize the flow.

How much straight pipe depends on what the meter actually senses. A magnetic flowmeter integrates velocity across the whole pipe cross-section and tolerates moderate asymmetry. A vortex meter watches a single shedding point and dies on swirl. A Coriolis tube measures mass directly and does not care about profile at all. The numbers below come from the manufacturer manuals and ISO 5167.

Straight Length by Flow Meter Type

| Meter type | Upstream | Downstream | Reason |
|---|---|---|---|
| Coriolis (mass) | 0 D | 0 D | Measures mass via tube vibration; profile irrelevant |
| Magnetic (magmeter) | 5 D | 2–3 D | Integrates velocity across full cross-section |
| Ultrasonic, multi-path inline | 10 D | 5 D | Path averaging tolerates moderate distortion |
| Ultrasonic, clamp-on retrofit | 20 D | 5 D | Two-path cannot compensate for swirl |
| Vortex shedding | 15–25 D | 5 D | Single shedding point destroyed by swirl |
| Thermal mass (gas) | 15 D | 5 D | Single insertion point; needs symmetric profile |
| Turbine | 10 D | 5 D | Rotor balance depends on profile uniformity |
| Orifice plate | 10–44 D | 4–7 D | See ISO 5167-2 (β-dependent) |
| Venturi tube | 5–10 D | 4 D | Smooth contour forgives some distortion |
| V-Cone / averaging pitot | 0–3 D | 1 D | Built-in flow conditioning |

D is the pipe inside diameter. A 200 mm magmeter needs 1.0 m upstream and 400–600 mm downstream — a manageable footprint. A 200 mm vortex meter in the same line needs 3–5 m upstream and 1 m downstream. The vortex meter often loses on installation cost alone for retrofit jobs. For the legacy general rule on 10D/5D, see our companion guide on upstream and downstream straight pipe.
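The conversion from diameters to physical length is simple arithmetic, and a short sketch makes it easy to repeat for other line sizes. The snippet below copies a few rows of the table into a dictionary; the names `STRAIGHT_RUN_D` and `straight_run_mm` are illustrative, not from any vendor tool, and the values are only as good as the table itself.

```python
# Straight-run multiples in pipe diameters (D), copied from the table above.
# Each entry is ((upstream_min, upstream_max), (downstream_min, downstream_max)).
STRAIGHT_RUN_D = {
    "coriolis": ((0, 0), (0, 0)),
    "magnetic": ((5, 5), (2, 3)),
    "ultrasonic_multipath": ((10, 10), (5, 5)),
    "ultrasonic_clamp_on": ((20, 20), (5, 5)),
    "vortex": ((15, 25), (5, 5)),
    "thermal_mass": ((15, 15), (5, 5)),
    "turbine": ((10, 10), (5, 5)),
}


def straight_run_mm(meter_type, pipe_id_mm):
    """Return ((up_min, up_max), (down_min, down_max)) in millimetres."""
    upstream, downstream = STRAIGHT_RUN_D[meter_type]

    def to_mm(multiples):
        return tuple(n * pipe_id_mm for n in multiples)

    return to_mm(upstream), to_mm(downstream)


print(straight_run_mm("magnetic", 200))  # ((1000, 1000), (400, 600))
print(straight_run_mm("vortex", 200))    # ((3000, 5000), (1000, 1000))
```

The two printed results reproduce the 200 mm magmeter and vortex figures quoted above.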

ISO 5167 Lengths for Differential Pressure Elements

Differential pressure elements — orifice plates, nozzles, Venturi tubes — have published straight-length tables in ISO 5167 that vary with β (throat-to-pipe diameter ratio). Excerpt for a single 90° elbow upstream of an orifice plate:

| β | Upstream (D) | Downstream (D) |
|---|---|---|
| 0.20 | 10 | 4 |
| 0.40 | 14 | 5 |
| 0.50 | 18 | 5 |
| 0.60 | 26 | 6 |
| 0.67 | 36 | 7 |
| 0.75 | 44 | 7 |

Higher β means a larger orifice bore relative to pipe size. That lowers the permanent pressure loss, but it also makes the discharge coefficient more sensitive to a disturbed velocity profile, which is why the required upstream length climbs from 10 D at β = 0.20 to 44 D at β = 0.75. Pick a smaller β (say 0.45 instead of 0.65) and the upstream straight-pipe budget roughly halves. For Venturi tubes the numbers are smaller, 5 to 10 D upstream depending on the disturbance type, because the smooth convergent cone tolerates more profile asymmetry. See our deeper guide on the Venturi tube for the geometry and standards reference.
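To estimate the upstream length for a β that falls between rows of the excerpt, one option is linear interpolation. The sketch below assumes interpolation between adjacent rows is acceptable for a first estimate; the function name `upstream_d` is hypothetical, and the full ISO 5167-2 tables should be checked for a real design.

```python
# (beta, upstream length in D) pairs from the single-elbow excerpt above.
ISO_EXCERPT = [
    (0.20, 10),
    (0.40, 14),
    (0.50, 18),
    (0.60, 26),
    (0.67, 36),
    (0.75, 44),
]


def upstream_d(beta):
    """Linearly interpolate upstream length (in D) between excerpt rows."""
    if not ISO_EXCERPT[0][0] <= beta <= ISO_EXCERPT[-1][0]:
        raise ValueError("beta is outside the range of the excerpt")
    for (b0, u0), (b1, u1) in zip(ISO_EXCERPT, ISO_EXCERPT[1:]):
        if b0 <= beta <= b1:
            return u0 + (u1 - u0) * (beta - b0) / (b1 - b0)


print(round(upstream_d(0.45), 1))  # 16.0
print(round(upstream_d(0.65), 1))  # 33.1
```

On these numbers, dropping β from 0.65 to 0.45 cuts the upstream requirement from about 33 D to about 16 D.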

Upstream Disturbance Multipliers

The straight-length table above assumes one 90° elbow upstream. Other disturbances need more:

- Two 90° elbows in the same plane: 1.5× the base value

- Two 90° elbows in perpendicular planes: 2× the base (severe swirl)

- Reducer (2D long, concentric): 0.5× the base value

- Expansion (2D long): 1.0× the base value

- Fully open gate valve: 1.0× the base value

- Half-open globe or ball valve: 2–3× the base value

- Pump discharge (centrifugal): 2× the base value

The dominant offender is two elbows out of plane. The first elbow creates a centerline shift; the second adds swirl on top. Even a vortex meter that gets 25D upstream of a single elbow may need 40–50D after two perpendicular elbows. A common field fix is to insert a flow conditioner in the upstream straight section — which buys back roughly half the required length.
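The multipliers above can be applied mechanically to any single-elbow base value. The following is a minimal sketch; the dictionary keys and the function name `required_upstream_d` are made up for illustration, and each multiplier is stored as a (low, high) pair so that the 2–3× valve case keeps its range.

```python
# Multipliers from the list above, relative to the single-elbow base value.
DISTURBANCE_MULTIPLIER = {
    "single_elbow": (1.0, 1.0),
    "two_elbows_same_plane": (1.5, 1.5),
    "two_elbows_perpendicular": (2.0, 2.0),
    "concentric_reducer": (0.5, 0.5),
    "expansion": (1.0, 1.0),
    "gate_valve_fully_open": (1.0, 1.0),
    "half_open_globe_or_ball_valve": (2.0, 3.0),
    "centrifugal_pump_discharge": (2.0, 2.0),
}


def required_upstream_d(base_d, disturbance):
    """Scale a single-elbow base length (in D) by the disturbance multiplier."""
    low, high = DISTURBANCE_MULTIPLIER[disturbance]
    return base_d * low, base_d * high


# Vortex meter with a 25 D single-elbow base, placed after two perpendicular elbows.
print(required_upstream_d(25, "two_elbows_perpendicular"))  # (50.0, 50.0)
```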

Flow Conditioners as a Compensation Strategy

When the available straight run is half what the meter demands, install a flow conditioner upstream of the meter and downstream of the disturbance. Three common types:

- Tube bundle (19-tube or 7-tube): kills swirl, restores symmetric profile in 4–5D. Adds 1–2 kPa pressure drop.

- Etoile or AMCA vane: radial vanes break swirl in 2–3D. Lower pressure drop than tube bundle but less effective on asymmetric profiles.

- Perforated plate (Zanker, Mitsubishi, NOVA): creates jets that recombine into symmetric profile in 8D. Standard for fiscal custody-transfer orifice metering per AGA-3.

The conditioner does not eliminate the straight-pipe budget. It compresses it. A vortex meter that would need 25D upstream of an elbow can be installed with 8D + a 1D Zanker plate + 4D, total 13D. Pressure drop is the trade — 5–20 kPa added at typical flows. For DP-element math underneath all of this, see our DP transmitter explainer.
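The budget arithmetic in the vortex example can be written out as a small helper. This is only a sketch of the bookkeeping; `conditioned_run` and its parameter names are assumptions for illustration, and the inputs are the figures quoted above.

```python
def conditioned_run(before_d, conditioner_d, after_d, unconditioned_d):
    """Return the total conditioned length (in D) and the fractional saving."""
    total_d = before_d + conditioner_d + after_d
    saving = 1 - total_d / unconditioned_d
    return total_d, saving


# 8 D of pipe, a 1 D Zanker plate, then 4 D to the meter, versus 25 D unconditioned.
total_d, saving = conditioned_run(8, 1, 4, 25)
print(total_d, f"{saving:.0%}")  # 13 48%
```

The saving of roughly half is bought with the added pressure drop, so the same comparison should be repeated against the available pump or line pressure margin.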

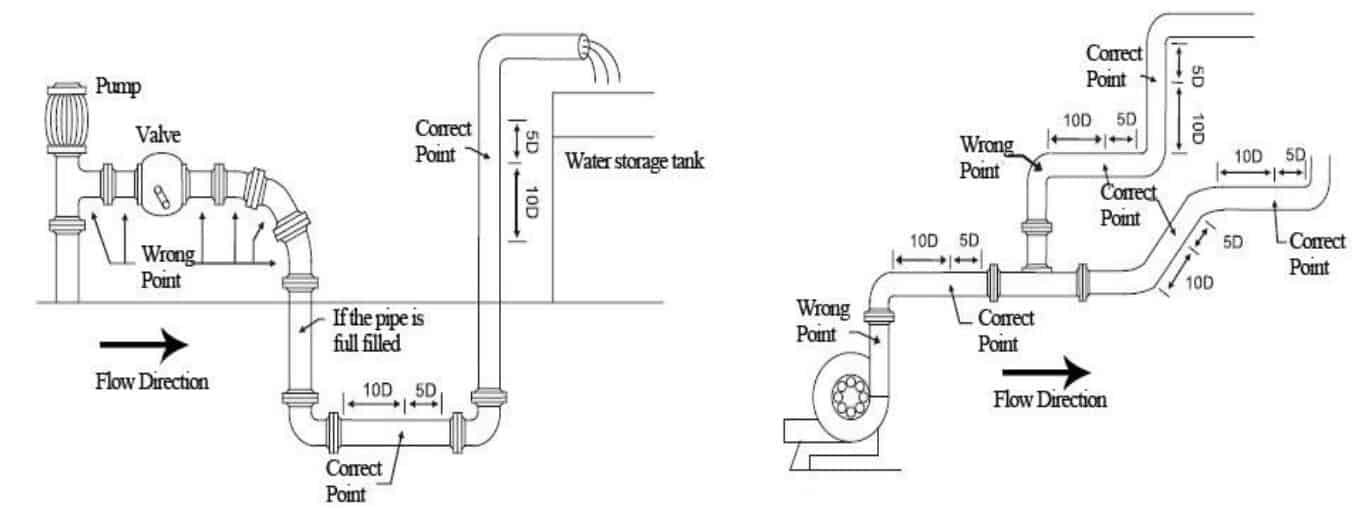

Measuring Straight Length the Right Way

Two field-measurement mistakes lose money:

- Counting from the wrong reference. Upstream length is measured from the downstream face of the disturbance (end of elbow weld, downstream of valve flange) to the front face of the meter primary element. Not centerline to centerline.

- Ignoring intermediate fittings. A tee or instrument tap inside the “straight” pipe section is a new disturbance. Restart the count.

Downstream length runs from the back face of the meter to the next disturbance. Most meters care less about downstream than upstream, but a too-short downstream run can drive cavitation back into the meter on liquid service. For installations where there is genuinely no room, our DP transmitter installation guide covers the impulse-line solutions that DP elements can use in tight spaces.
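The two measurement rules can be captured in a few lines. The sketch below assumes every position is a distance along the pipe axis, in the flow direction, from one common datum, and that each intermediate fitting is recorded at its downstream face; the function name `effective_upstream_mm` is hypothetical.

```python
def effective_upstream_mm(disturbance_face_mm, meter_face_mm, fitting_faces_mm=()):
    """Usable upstream run: the count restarts at the last fitting before the meter."""
    start = disturbance_face_mm
    for face in fitting_faces_mm:
        # Only fittings between the disturbance and the meter restart the count.
        if disturbance_face_mm < face < meter_face_mm:
            start = max(start, face)
    return meter_face_mm - start


pipe_id_mm = 200
run_mm = effective_upstream_mm(0, 3000, fitting_faces_mm=[1800])
print(run_mm, run_mm / pipe_id_mm)  # 1200 6.0
```

In this example a 3 m run that looks like 15 D on the drawing is really 6 D once the tee at 1.8 m is counted.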

Common Mistakes That Wreck Accuracy

| Mistake | Typical bias | Fix |
|---|---|---|
| Vortex meter installed 5D after an elbow (needs 25D) | 5–15% reading error | Add 20D or install flow conditioner |
| Orifice β = 0.7 with only 10D upstream (table calls for 36D or more) | 3–8% over-read | Reduce β to 0.45 or move meter |
| Ultrasonic clamp-on right after a pump (needs 20D + conditioner) | 2–5% drift, swirl-dependent | Use inline spool-piece ultrasonic instead |
| Tee inside the “straight” section | Random 1–4% noise | Reroute tee or restart length count from tee |
| Pipe diameter at flange not matching meter bore | Edge step bias 2–4% | Use a 2D concentric reducer 5D upstream |
| Measuring from centerline to centerline instead of weld faces | Apparent compliance, real 10–15% under-length | Field-measure from disturbance face |

For a quick reference on a related rule-of-thumb question — what is K-factor and why straight-pipe affects it — see our piece on flow meter K-factor.

Short-Run Flow Meter Alternatives

Magnetic Flow Meter

DN10 to DN3000 | 5D up / 3D down | ±0.5% — tolerates short straight runs on conductive liquids: water, slurry, acids.

Vortex Flow Meter

DN15 to DN300 | needs 15–25D up / 5D down | ±1% — steam, gas, condensate where Coriolis is too expensive.

Wedge Flow Meter

DN15 to DN1200 | needs only 4–6D up | ±1% — heavy oil, slurry, dirty service where orifice plate plugs.

For installations where straight pipe is truly impossible — closely spaced manifolds, retrofit jobs in cramped equipment skids — Coriolis mass flow is the safest answer. Send pipe size, fluid, flow range, available straight length, and disturbance type (elbow, valve, pump) to our engineering team via the form below and we will spec a meter for the geometry you have.

FAQ

How much straight pipe does a magnetic flow meter need?

Per the manufacturer manuals: 5 pipe diameters upstream and 2 to 3 pipe diameters downstream from the nearest disturbance. A 200 mm magmeter needs about 1.0 m straight upstream and 400–600 mm downstream. Magnetic flow meters tolerate short runs because the voltage picked up at the electrodes reflects velocity averaged across the full pipe cross-section.

What is the straight length requirement for a vortex flow meter?

Typically 15 to 25 pipe diameters upstream depending on the disturbance type, and 5 diameters downstream. As a guide: 15D after a single elbow, 25D after two elbows out of plane, and 30D or more after a half-open valve. Vortex meters react badly to swirl because they sense vortex shedding at a single bluff body, and any swirl shifts the shedding frequency.

Do Coriolis flow meters need straight pipe?

No. Per ISO 10790, Coriolis meters have no straight-pipe requirement for accuracy because they measure mass directly through tube vibration and are insensitive to velocity profile distortion. The only installation rule is to keep the tubes full of liquid (no air pockets, no entrained gas).

How do you measure straight pipe length for a flow meter?

From the downstream face of the disturbance (elbow weld face, valve flange) to the front face of the meter primary element. Not centerline to centerline. Any intermediate fitting — tee, tap, reducer — restarts the count. Downstream length is measured from the back face of the meter to the next disturbance.

Can a flow conditioner replace straight pipe?

It can reduce the required straight length by 40–60%, not eliminate it. A 19-tube bundle or Zanker plate installed downstream of a disturbance restores a symmetric velocity profile in 4–8D, so a vortex meter that would need 25D upstream of an elbow can run on 8D + conditioner + 4D. The trade is 5–20 kPa added pressure drop at typical flows.


Wu Peng, born in 1980, is a highly respected and accomplished male engineer with extensive experience in the field of automation. With over 20 years of industry experience, Wu has made significant contributions to both academia and engineering projects.

Throughout his career, Wu Peng has participated in numerous national and international engineering projects. Some of his most notable projects include the development of an intelligent control system for oil refineries, the design of a cutting-edge distributed control system for petrochemical plants, and the optimization of control algorithms for natural gas pipelines.